OrigoAI - Powering AI Infrastructure with Datacenters & GPU Cloud

Enterprise Ready | Resilient | Scalable

Datacenter & Cloud-Based Solutions to Accelerate Your Data Analytics, Training, Inference, and Application Framework Workloads.

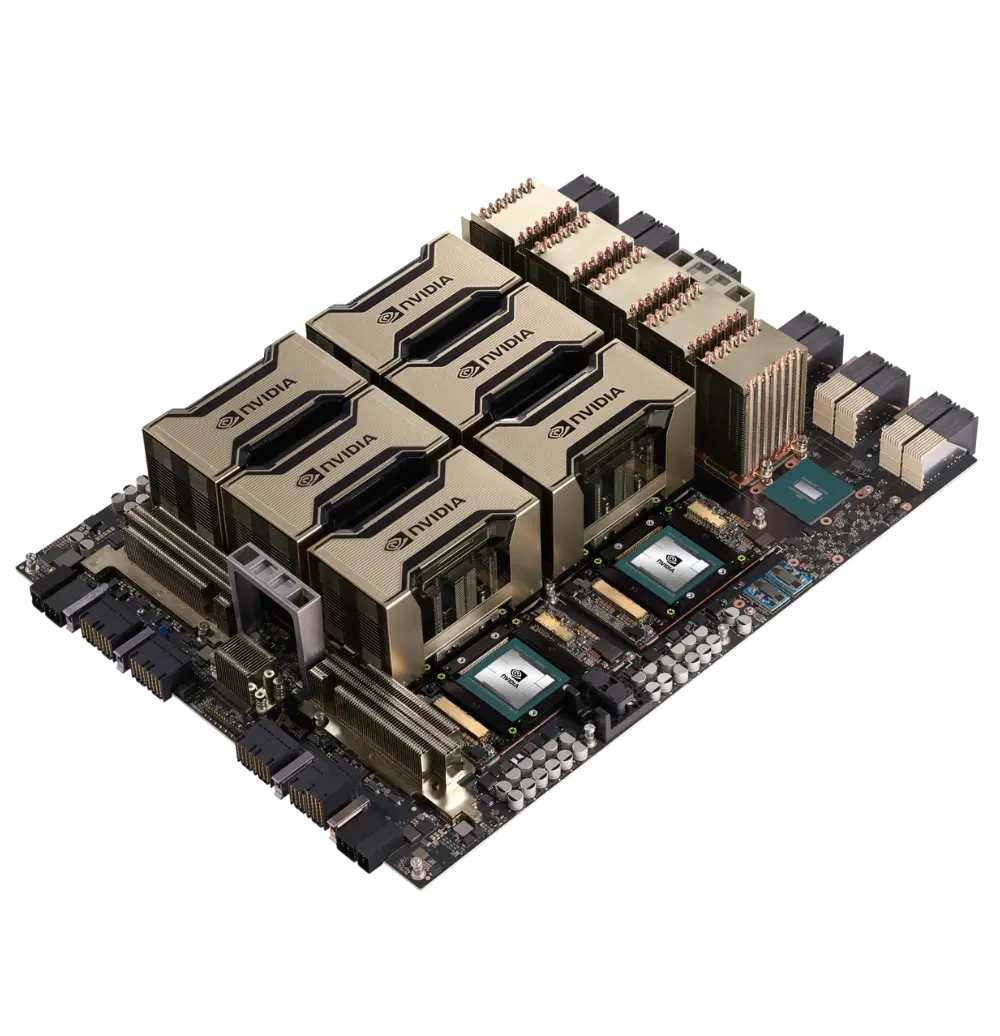

Experience Next-Level Performance with H200 GPUs

OrigoAI will provide NVIDIA H200 cloud instances to clients on a first-come, first-served reservation basis. The H200 is the first GPU to offer 141 gigabytes (GB) of HBM3e memory at 4.8 terabytes per second (TB/s). That is nearly double the capacity of the NVIDIA H100 Tensor Core GPU, with 1.4X more memory bandwidth.

Reserve Your H100 Cloud Instance Now

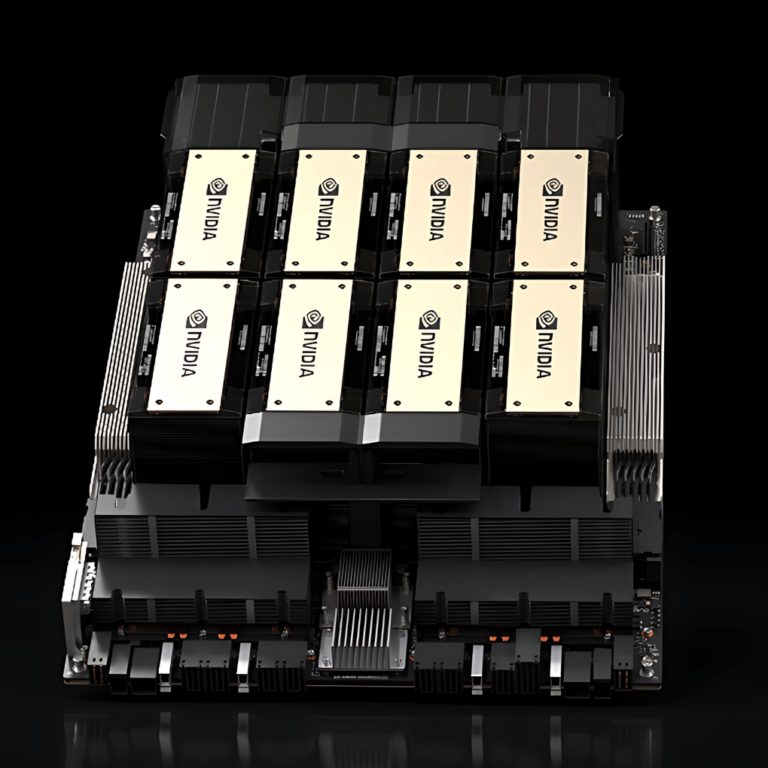

Node Specifications

8x 80GB H100 SXM5 with 640GB of VRAM

2.0 TB DDR5 RAM with ECC

Latest Intel and AMD Processors

2x 100Gbps Ethernet Storage Fabric

8x 400Gbps InfiniBand Compute Fabric

Scale beyond 512 Nodes (Contracted Services)
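For capacity planning, the node figures above can be multiplied out to cluster totals. The short Python sketch below is illustrative only: the function name `cluster_totals` is hypothetical, the constants are copied from the node specifications, and the fabric figure is simply the sum of nominal link rates, not a measured throughput.

```python
# Constants copied from the node specifications above.
GPUS_PER_NODE = 8
VRAM_PER_GPU_GB = 80
COMPUTE_LINKS_PER_NODE = 8
COMPUTE_LINK_GBPS = 400  # nominal rate per compute-fabric link


def cluster_totals(nodes: int) -> dict:
    """Return nominal aggregate figures for a cluster of `nodes` nodes.

    Hypothetical helper for illustration; not part of any OrigoAI tooling.
    """
    gpus = nodes * GPUS_PER_NODE
    return {
        "gpus": gpus,
        "vram_gb": gpus * VRAM_PER_GPU_GB,
        # Sum of nominal link rates across all nodes, in Gbps.
        "compute_fabric_gbps": nodes * COMPUTE_LINKS_PER_NODE * COMPUTE_LINK_GBPS,
    }


if __name__ == "__main__":
    for n in (1, 512):
        print(n, cluster_totals(n))
```

A single node works out to 8 GPUs and 640 GB of VRAM, matching the specification line above; a 512-node deployment would total 4,096 GPUs.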

Built for Your Enterprise AI Workloads

Rapidly implement tailored security and compliance safeguards across your business and partner networks.

Move fast and with flexibility, streamlining workload distribution and administration, and driving innovation.

Plans that scale with your business

Choose what works for your team – we support various compute plans to fit your enterprise application inference or LLM training needs.

Origo Cloud 32™

Standard Cluster geared towards inference and applications

Origo Cloud Ultra™

Expand up to 96 nodes (8-GPU servers) for larger workloads.

Origo Cloud EXO™

Full single-site capacity allocated directly to your AI applications.

OrigoAI Deployments are Powered with

Let Us Help You Expand Your Enterprise AI Projects

Accelerate your HPC & AI workload performance with a subscription to our secure cloud platform featuring NVIDIA H100 GPUs.